When Do You Consider Log Determinants Similar

Muz Play

Mar 31, 2025 · 5 min read

When Do You Consider Log Determinants Similar?

Log determinants, often encountered in statistics, machine learning, and information theory, represent the logarithm of the determinant of a matrix. Understanding when two log determinants are considered "similar" is crucial for various applications, ranging from model selection to assessing the robustness of statistical methods. This similarity isn't a straightforward numerical comparison; it depends heavily on the context and the specific application. This article delves into the nuances of comparing log determinants, examining different scenarios and the criteria for judging similarity.

Understanding Log Determinants and Their Context

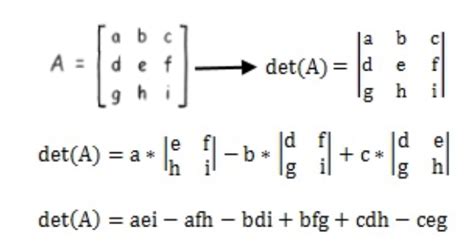

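Before diving into similarity, let's solidify our understanding of log determinants. The determinant of a square matrix, denoted as |A| or det(A), is a scalar value reflecting properties like the matrix's invertibility (a non-zero determinant implies invertibility) and the volume scaling factor under linear transformations. The log determinant, log|A|, is the natural logarithm of the determinant. It is defined when the determinant is positive, as it is for the symmetric positive definite covariance matrices common in these applications; for other invertible matrices, the logarithm of the absolute value of the determinant is often used instead. Its use is advantageous because: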

- Numerical Stability: Determinants can become extremely large or small, leading to numerical overflow or underflow in computations. The logarithm scales these values, improving numerical stability.

- Additivity: The log determinant of a product of matrices is the sum of their individual log determinants: log|AB| = log|A| + log|B|. This property simplifies calculations and interpretations in many applications.

- Information Theory: In information theory, the log determinant often appears in expressions related to entropy and mutual information, making it a fundamental quantity.

- Gaussian Processes: In Gaussian process regression and other machine learning applications, the log determinant of covariance matrices plays a pivotal role in calculating likelihoods and model evidence.
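The first two points can be checked numerically. The following is a minimal sketch, assuming NumPy is available; the matrix size, the random seed, and the way the test matrices are built are illustrative choices, not part of the discussion above.

```python
import numpy as np

rng = np.random.default_rng(0)

# Build a symmetric positive definite matrix (illustrative data only).
n = 500
M = rng.standard_normal((n, n))
A = M @ M.T + n * np.eye(n)

# Every eigenvalue of A is at least n = 500, so det(A) exceeds the float64
# range and the direct determinant is not finite.
with np.errstate(over="ignore"):
    det_direct = np.linalg.det(A)

# slogdet returns (sign, log|det|) without forming the determinant itself.
sign, logdet_A = np.linalg.slogdet(A)

# For positive definite matrices a Cholesky factor A = L L^T gives the same
# value: log det(A) = 2 * sum(log(diag(L))).
L = np.linalg.cholesky(A)
logdet_chol = 2.0 * np.sum(np.log(np.diag(L)))

# Additivity: log det(AB) = log det(A) + log det(B).
B = np.diag(rng.uniform(0.5, 2.0, size=n))
_, logdet_B = np.linalg.slogdet(B)
_, logdet_AB = np.linalg.slogdet(A @ B)

print(det_direct, logdet_A, logdet_chol)
print(logdet_AB, logdet_A + logdet_B)
```

Working with `slogdet` or a Cholesky factor keeps every intermediate quantity on the log scale, which is why the comparison methods below take log determinants rather than determinants as inputs.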

Defining "Similarity" for Log Determinants: A Multifaceted Perspective

The notion of "similarity" between two log determinants, log|A| and log|B|, isn't absolute. It depends significantly on the context and the goals of the analysis. Here are several perspectives:

1. Absolute Difference Threshold: A Simple Approach

The simplest method is to define a threshold, ε, and consider log|A| and log|B| similar if |log|A| - log|B|| < ε. This approach is straightforward but has limitations:

- Scale Dependence: The choice of ε is crucial and highly context-dependent. A difference of δ between two log determinants corresponds to a ratio of e^δ between the determinants, and log|cA| = n log c + log|A| for an n × n matrix, so the same ε is a much stricter requirement in high dimensions than in low ones.

- Lack of Statistical Significance: This method ignores the variability or uncertainty of log determinants that are estimated from data. A difference below ε may still be genuine, while a difference above ε may be nothing more than estimation noise.
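As a rough illustration of the threshold rule and of what a given difference means on the determinant scale, here is a small sketch. The function names and the example values are hypothetical, chosen only for this illustration.

```python
import math


def logdets_close_abs(logdet_a: float, logdet_b: float, eps: float) -> bool:
    """Return True if the two log determinants differ by less than eps."""
    return abs(logdet_a - logdet_b) < eps


def implied_det_ratio(logdet_a: float, logdet_b: float) -> float:
    """Ratio det(A) / det(B) implied by the two log determinants."""
    return math.exp(logdet_a - logdet_b)


def per_dimension_gap(logdet_a: float, logdet_b: float, n: int) -> float:
    """Absolute difference divided by the matrix dimension n."""
    return abs(logdet_a - logdet_b) / n


# Hypothetical values for two 100 x 100 matrices.
close = logdets_close_abs(10.0, 10.05, eps=0.1)
ratio = implied_det_ratio(10.0, 10.05)
gap = per_dimension_gap(10.0, 10.05, n=100)
print(close, ratio, gap)
```

Dividing by the dimension is one possible way to make thresholds comparable across matrix sizes; whether that normalization is appropriate depends on the application.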

2. Relative Difference Threshold: Accounting for Magnitude

To address the scale-dependence issue, we can use a relative difference threshold:

|log|A| - log|B|| / max(|log|A||, |log|B||) < ε

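This approach considers the relative difference in log determinants, making it less sensitive to the magnitude of the values. However, it still requires careful selection of ε, it doesn't incorporate statistical significance, and the ratio becomes unstable when both log determinants are close to zero (that is, when both determinants are close to 1).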
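A minimal sketch of the relative rule follows. The function name and the `tiny` guard for the case where both values are essentially zero are assumptions added for this illustration.

```python
def logdets_close_rel(logdet_a: float, logdet_b: float, eps: float,
                      tiny: float = 1e-12) -> bool:
    """Relative-difference test on two log determinants.

    `tiny` is a guard (an assumption of this sketch): if both log
    determinants are essentially zero, they are treated as similar
    instead of dividing by a vanishing denominator.
    """
    scale = max(abs(logdet_a), abs(logdet_b))
    if scale < tiny:
        return True
    return abs(logdet_a - logdet_b) / scale < eps


# The same absolute gap can pass or fail depending on the magnitudes.
large_values = logdets_close_rel(1000.0, 1001.0, eps=0.01)  # gap is 0.1% of the scale
small_values = logdets_close_rel(0.001, 0.002, eps=0.01)    # gap is 50% of the scale
both_zero = logdets_close_rel(0.0, 0.0, eps=0.01)
print(large_values, small_values, both_zero)
```

Python's built-in `math.isclose(a, b, rel_tol=eps)` applies essentially the same criterion, using `<=` instead of `<` and an optional absolute tolerance.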

3. Statistical Hypothesis Testing: Incorporating Uncertainty

A more robust approach involves statistical hypothesis testing. This requires repeated estimates of each log determinant and assumptions about their distribution. If we assume that the estimates are normally distributed, we can perform a t-test or a z-test to assess whether the difference between them is statistically significant. Unlike the threshold methods, this approach accounts for the uncertainty inherent in estimating log determinants.

- Assumptions: The validity of this approach hinges on the validity of the normality assumption. In many cases, this assumption might not be realistic, and alternative non-parametric tests might be necessary.

- Sample Size: The power of the hypothesis test is influenced by the sample size used to estimate the log determinants. Larger samples generally lead to more powerful tests.
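The z-test mentioned above can be sketched with the standard library alone. This sketch assumes that each log determinant has been estimated several times independently (for example, from independent data batches), that the estimates are approximately normal, and that the samples are large enough for a normal approximation; the function name and the sample values are hypothetical.

```python
import math
from statistics import NormalDist, mean, variance


def logdet_z_test(samples_a, samples_b):
    """Two-sided z-test for equal mean log determinant.

    samples_a, samples_b: independent repeated estimates of log|A| and log|B|.
    Returns (z statistic, two-sided p-value) under a normal approximation.
    """
    # Standard error of the difference between the two sample means.
    se = math.sqrt(variance(samples_a) / len(samples_a)
                   + variance(samples_b) / len(samples_b))
    z = (mean(samples_a) - mean(samples_b)) / se
    p_value = 2.0 * (1.0 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical repeated estimates.
est_a = [10.1, 9.9, 10.0, 10.2, 9.8]
est_b = [10.0, 10.1, 9.9, 10.05, 9.95]   # same centre as est_a
est_c = [12.0, 12.1, 11.9, 12.2, 11.8]   # clearly shifted

z_same, p_same = logdet_z_test(est_a, est_b)
z_diff, p_diff = logdet_z_test(est_a, est_c)
print(p_same, p_diff)
```

With only a handful of estimates per group, a t-test or a non-parametric alternative would usually be the safer choice, in line with the caveats listed above.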

4. Kullback-Leibler (KL) Divergence: For Probabilistic Models

In the context of probabilistic models, the Kullback-Leibler divergence provides a measure of the difference between two probability distributions. If the matrices A and B represent covariance matrices of Gaussian distributions, the KL divergence can be expressed in terms of their log determinants and inverses. For two zero-mean Gaussians in k dimensions, KL(N(0, A) || N(0, B)) = ½ [tr(B⁻¹A) - k + log|B| - log|A|]. A small KL divergence suggests that the two distributions are similar.

- Asymmetry: The KL divergence is not symmetric: in general, KL(P||Q) ≠ KL(Q||P). The interpretation depends on which distribution is chosen as the reference.

- Computational Complexity: Calculating the KL divergence can be computationally expensive. For Gaussians with dense covariance matrices, it requires factorizations or linear solves whose cost grows cubically with the matrix dimension.
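For zero-mean Gaussians, the standard closed form is KL(N(0, P) || N(0, Q)) = ½ [tr(Q⁻¹P) - k + log|Q| - log|P|]. The sketch below implements it, assuming NumPy and symmetric positive definite inputs; the function name and the example matrices are illustrative.

```python
import numpy as np


def gaussian_kl_zero_mean(cov_p, cov_q):
    """KL(N(0, cov_p) || N(0, cov_q)) for symmetric positive definite covariances."""
    k = cov_p.shape[0]
    sign_p, logdet_p = np.linalg.slogdet(cov_p)
    sign_q, logdet_q = np.linalg.slogdet(cov_q)
    if sign_p <= 0 or sign_q <= 0:
        raise ValueError("covariance matrices must have positive determinant")
    # tr(cov_q^{-1} cov_p) via a linear solve rather than an explicit inverse.
    trace_term = np.trace(np.linalg.solve(cov_q, cov_p))
    return 0.5 * (trace_term - k + logdet_q - logdet_p)


I2 = np.eye(2)

# Asymmetry: swapping the arguments changes the value.
kl_forward = gaussian_kl_zero_mean(I2, 2.0 * I2)
kl_reverse = gaussian_kl_zero_mean(2.0 * I2, I2)

# Equal log determinants (both zero here) do not imply zero divergence.
kl_same_logdet = gaussian_kl_zero_mean(np.diag([2.0, 0.5]), I2)
print(kl_forward, kl_reverse, kl_same_logdet)
```

The last case shows why the log determinant term is only one ingredient: two covariance matrices can have identical log determinants and still describe different distributions.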

5. Matrix Distance Metrics: Beyond Log Determinants

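Sometimes, the focus shifts from comparing the log determinants themselves to comparing the matrices that generate them. Various matrix distance metrics, like the Frobenius norm or the spectral norm of the difference A - B, can be used to compare matrices A and B directly. If the matrices are close in terms of these metrics and well conditioned, it is plausible that their log determinants will also be similar. The link is loose in both directions: nearly singular matrices can be close in norm yet have very different log determinants, and matrices with equal log determinants can be far apart.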

- Indirect Comparison: This is an indirect approach, but it can provide valuable insights into the similarity of underlying structures.

- Computational Cost: The Frobenius norm is cheap to compute, but the spectral norm requires the largest singular value, which can be computationally expensive for large matrices.
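The relationship between matrix distance and log determinant difference can be explored with a short sketch. It assumes NumPy; the helper name `compare_matrices` and the diagonal example matrices are hypothetical and chosen only to make the arithmetic easy to follow.

```python
import numpy as np


def compare_matrices(A, B):
    """Return norm-based distances between A and B and their log-determinant gap."""
    diff = A - B
    _, logdet_a = np.linalg.slogdet(A)
    _, logdet_b = np.linalg.slogdet(B)
    return {
        "frobenius": np.linalg.norm(diff, ord="fro"),
        "spectral": np.linalg.norm(diff, ord=2),
        "logdet_gap": abs(logdet_a - logdet_b),
    }


# Same log determinant (zero for both), yet clearly different matrices.
far_apart = compare_matrices(np.diag([2.0, 0.5]), np.eye(2))

# Nearly identical in norm, but a large log-determinant gap because both
# matrices are close to singular.
near_singular = compare_matrices(np.diag([1.0, 1e-8]), np.diag([1.0, 1e-6]))

print(far_apart)
print(near_singular)
```

The two cases suggest treating matrix norms as a complement to a log determinant comparison rather than a substitute for it.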

Practical Examples and Applications

The choice of method for comparing log determinants depends heavily on the application.

- Model Selection in Gaussian Processes: When comparing different Gaussian process models, the log-likelihood, often involving log determinants of covariance matrices, is used. Here, a Bayesian approach involving model comparison using Bayes factors or comparing log marginal likelihoods would be appropriate, so what matters is the strength of evidence for one model over another rather than the raw difference in log determinants.

- Dimensionality Reduction: In techniques like principal component analysis (PCA), the log determinant of the covariance matrix equals the sum of the logarithms of its eigenvalues, which are the variances along the principal axes. Here, comparing log determinants might help evaluate the information loss due to dimensionality reduction. Relative difference thresholds or KL divergence could be applicable.

- Robustness Analysis: If the log determinant of a matrix is used as a measure of stability or robustness of a system, a simple absolute difference threshold might be sufficient, provided the threshold is carefully chosen based on the context and acceptable error levels.

Conclusion: Choosing the Right Approach

Determining when two log determinants are considered similar is not a trivial task. The appropriate method depends heavily on the specific application, the context of the analysis, and the desired level of rigor. While simple thresholding methods offer straightforward comparisons, they lack the statistical rigor of hypothesis testing and might be sensitive to the scale of the determinants. Statistical hypothesis testing provides a more robust approach, especially when uncertainty needs to be considered. For probabilistic models, the KL divergence provides a suitable measure of similarity between distributions, indirectly reflecting the similarity of log determinants. Ultimately, the choice of method should be guided by the specific research question and the nature of the data. A thorough understanding of the underlying assumptions and limitations of each method is essential for a reliable and meaningful comparison of log determinants.
