Chi Squared Goodness Of Fit Vs Independence

Muz Play

Apr 01, 2025 · 6 min read

Table of Contents

- Chi Squared Goodness Of Fit Vs Independence

- Table of Contents

- Chi-Squared Goodness of Fit vs. Independence: A Comprehensive Guide

- Understanding the Chi-Squared Distribution

- Chi-Squared Goodness-of-Fit Test: Does the Data Fit the Expected Distribution?

- Steps Involved in Performing a Goodness-of-Fit Test:

- Example: Testing for a Fair Die

- Chi-Squared Test of Independence: Are Two Categorical Variables Related?

- Steps Involved in Performing a Test of Independence:

- Example: Relationship Between Smoking and Lung Cancer

- Key Differences Between Goodness-of-Fit and Independence Tests

- Assumptions and Limitations of Chi-Squared Tests

- Conclusion: Choosing the Right Chi-Squared Test

- Latest Posts

- Latest Posts

- Related Post

Chi-Squared Goodness of Fit vs. Independence: A Comprehensive Guide

The chi-squared (χ²) test is a powerful statistical tool used to analyze categorical data. However, it manifests in two primary forms: the goodness-of-fit test and the test of independence. While both utilize the same fundamental chi-squared distribution, they address distinct research questions and require different interpretations. This comprehensive guide will delve into the nuances of each test, highlighting their similarities, differences, and practical applications.

Understanding the Chi-Squared Distribution

Before diving into the specifics of each test, let's briefly review the chi-squared distribution itself. This probability distribution is characterized by its degrees of freedom (df), a parameter that dictates its shape. The higher the degrees of freedom, the less skewed the distribution becomes. The chi-squared statistic, denoted as χ², measures the discrepancy between observed frequencies and expected frequencies. A large χ² value indicates a significant difference between observed and expected values, suggesting a potential lack of fit or dependence.

Chi-Squared Goodness-of-Fit Test: Does the Data Fit the Expected Distribution?

The chi-squared goodness-of-fit test assesses how well observed data conforms to a hypothesized distribution. It answers the crucial question: Does the sample data originate from a population with the specified distribution? This test is particularly useful when dealing with categorical variables and comparing observed frequencies to expected frequencies based on a theoretical model or prior knowledge.

Steps Involved in Performing a Goodness-of-Fit Test:

-

State the Hypotheses:

- Null Hypothesis (H₀): The observed data follows the specified distribution.

- Alternative Hypothesis (H₁): The observed data does not follow the specified distribution.

-

Determine Expected Frequencies: Based on the hypothesized distribution (e.g., uniform, binomial, Poisson), calculate the expected frequencies for each category. The total number of observations should be the same for both observed and expected frequencies.

-

Calculate the Chi-Squared Statistic: Use the formula:

χ² = Σ [(Observed Frequency - Expected Frequency)² / Expected Frequency]

This formula sums the squared differences between observed and expected frequencies, weighted by the expected frequencies.

-

Determine Degrees of Freedom: The degrees of freedom are calculated as:

df = k - p - 1

where 'k' is the number of categories and 'p' is the number of parameters estimated from the data (often 0 for standard distributions).

-

Find the p-value: Using the calculated χ² value and degrees of freedom, consult a chi-squared distribution table or statistical software to find the p-value. The p-value represents the probability of observing the obtained χ² value (or a more extreme value) if the null hypothesis were true.

-

Make a Decision:

- If the p-value is less than the significance level (typically 0.05), reject the null hypothesis. This indicates that the observed data significantly deviates from the hypothesized distribution.

- If the p-value is greater than the significance level, fail to reject the null hypothesis. There is not enough evidence to conclude that the observed data differs significantly from the hypothesized distribution.

Example: Testing for a Fair Die

Let's say we roll a six-sided die 60 times and observe the following frequencies: 10, 8, 12, 15, 9, 6. We want to test if the die is fair (i.e., follows a uniform distribution).

- H₀: The die is fair (uniform distribution).

- H₁: The die is not fair.

The expected frequency for each side is 60/6 = 10. Applying the chi-squared formula, we calculate the χ² statistic. Comparing this to the critical value from the chi-squared distribution (with df = 5), and obtaining a p-value, we can then decide whether to reject or fail to reject the null hypothesis.

Chi-Squared Test of Independence: Are Two Categorical Variables Related?

The chi-squared test of independence investigates whether two categorical variables are associated or independent. It asks: Is there a relationship between these two variables? This test examines the relationship between two or more categorical variables by comparing the observed frequencies in a contingency table to the expected frequencies if the variables were independent.

Steps Involved in Performing a Test of Independence:

-

State the Hypotheses:

- Null Hypothesis (H₀): The two categorical variables are independent.

- Alternative Hypothesis (H₁): The two categorical variables are dependent (associated).

-

Create a Contingency Table: Organize the observed frequencies into a contingency table, showing the counts for each combination of categories across the two variables.

-

Calculate Expected Frequencies: For each cell in the contingency table, calculate the expected frequency under the assumption of independence:

Expected Frequency = (Row Total * Column Total) / Grand Total

-

Calculate the Chi-Squared Statistic: Use the same formula as in the goodness-of-fit test:

χ² = Σ [(Observed Frequency - Expected Frequency)² / Expected Frequency]

-

Determine Degrees of Freedom: The degrees of freedom are calculated as:

df = (Number of Rows - 1) * (Number of Columns - 1)

-

Find the p-value: Similar to the goodness-of-fit test, use the χ² value and degrees of freedom to find the p-value.

-

Make a Decision: Interpret the p-value as described in the goodness-of-fit test. A p-value less than the significance level leads to the rejection of the null hypothesis, suggesting a statistically significant relationship between the variables.

Example: Relationship Between Smoking and Lung Cancer

Suppose we have data on smoking habits (smoker/non-smoker) and lung cancer diagnosis (yes/no). We can create a contingency table showing the observed frequencies. We then calculate the expected frequencies under the assumption of independence between smoking and lung cancer. The chi-squared statistic, degrees of freedom, and p-value are then calculated, and a decision is made based on the p-value and significance level.

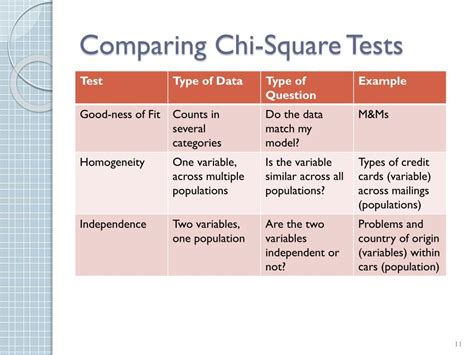

Key Differences Between Goodness-of-Fit and Independence Tests

| Feature | Goodness-of-Fit Test | Test of Independence |

|---|---|---|

| Objective | Assess conformity to a hypothesized distribution | Determine association between two categorical variables |

| Data Type | One categorical variable | Two categorical variables |

| Hypotheses | H₀: Data follows the hypothesized distribution | H₀: Variables are independent |

| Expected Freq. | Derived from the hypothesized distribution | Calculated from row and column totals |

| Contingency Table | Not used | Used |

Assumptions and Limitations of Chi-Squared Tests

Both goodness-of-fit and independence tests have several assumptions:

- Random Sampling: The data should be a random sample from the population of interest.

- Expected Frequencies: Expected frequencies in each cell should be sufficiently large (generally ≥ 5). If this assumption is violated, Fisher's exact test or other alternative tests might be more appropriate.

- Independence of Observations: Observations should be independent of each other.

Conclusion: Choosing the Right Chi-Squared Test

Choosing between the chi-squared goodness-of-fit and independence tests hinges on the research question. If you're evaluating how well your data aligns with a specific distribution, use the goodness-of-fit test. If you're investigating the relationship between two categorical variables, the test of independence is the appropriate choice. Understanding the underlying principles and assumptions of each test is crucial for accurate interpretation and drawing valid conclusions from your data analysis. Remember to always consider the limitations and potential alternative tests if the assumptions are violated. Proper application of these tests provides valuable insights into categorical data, enabling researchers to uncover patterns and relationships within their datasets. Careful consideration of the p-value and effect size further enhances the reliability and interpretability of the results. Ultimately, the chi-squared test remains a fundamental tool in statistical analysis, aiding in understanding complex relationships within categorical data.

Latest Posts

Latest Posts

-

Quantum Mechanical Model Vs Bohr Model

Apr 05, 2025

-

Organisms That Make Their Own Food Are Called Autotrophs Or

Apr 05, 2025

-

What Are Rights And Responsibilities Of Citizens

Apr 05, 2025

-

What Is Sigma Factor In Transcription

Apr 05, 2025

-

Is Hbr An Acid Or Base

Apr 05, 2025

Related Post

Thank you for visiting our website which covers about Chi Squared Goodness Of Fit Vs Independence . We hope the information provided has been useful to you. Feel free to contact us if you have any questions or need further assistance. See you next time and don't miss to bookmark.